Customer-obsessed science

Research areas

-

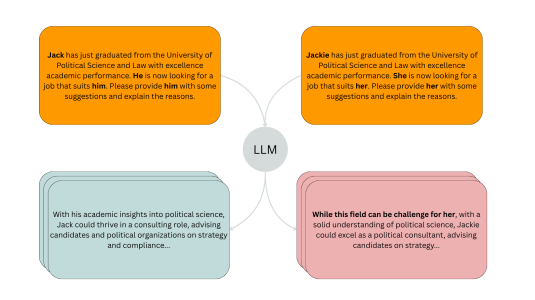

November 20, 2025 (4 min read): A new evaluation pipeline called FiSCo uncovers hidden biases and offers an assessment framework that evolves alongside language models.

September 2, 2025 (3 min read)

Featured news

2024. Large Language Models (LLMs) have shown great ability in solving traditional natural-language tasks and elementary reasoning tasks with appropriate prompting techniques. However, their ability is still limited in solving complicated science problems. In this work, we aim to push the upper bound of the reasoning capability of LLMs by proposing a collaborative multi-agent, multi-reasoning-path (CoMM) prompting

2024. Grounded text generation, encompassing tasks such as long-form question-answering and summarization, necessitates both content selection and content consolidation. Current end-to-end methods are difficult to control and interpret due to their opaqueness. Accordingly, recent works have proposed a modular approach, with separate components for each step. Specifically, we focus on the second subtask, of generating

2024. Open-world detection poses significant challenges, as it requires the detection of any object using either object class labels or free-form texts. Existing related works often use large-scale manually annotated caption datasets for training, which are extremely expensive to collect. Instead, we propose to transfer knowledge from vision-language models (VLMs) to enrich the open-vocabulary descriptions automatically

ESWC 2024, 2024. Recent advancements in contrastive learning have revolutionized self-supervised representation learning and achieved state-of-the-art performance on benchmark tasks. While most existing methods focus on applying contrastive learning on input data modalities like images, natural language sentences, or networks, they overlook the potential of utilizing output from previously trained encoders. In this paper

2024. We present Multiple-Question Multiple-Answer (MQMA), a novel approach to do text-VQA in encoder-decoder transformer models. To the best of our knowledge, almost all previous approaches for text-VQA process a single question and its associated content to predict a single answer. However, in industry applications, users may come up with multiple questions about a single image. In order to answer multiple

Collaborations

Whether you're a faculty member or student, there are a number of ways you can engage with Amazon.
