Customer-obsessed science

Research areas

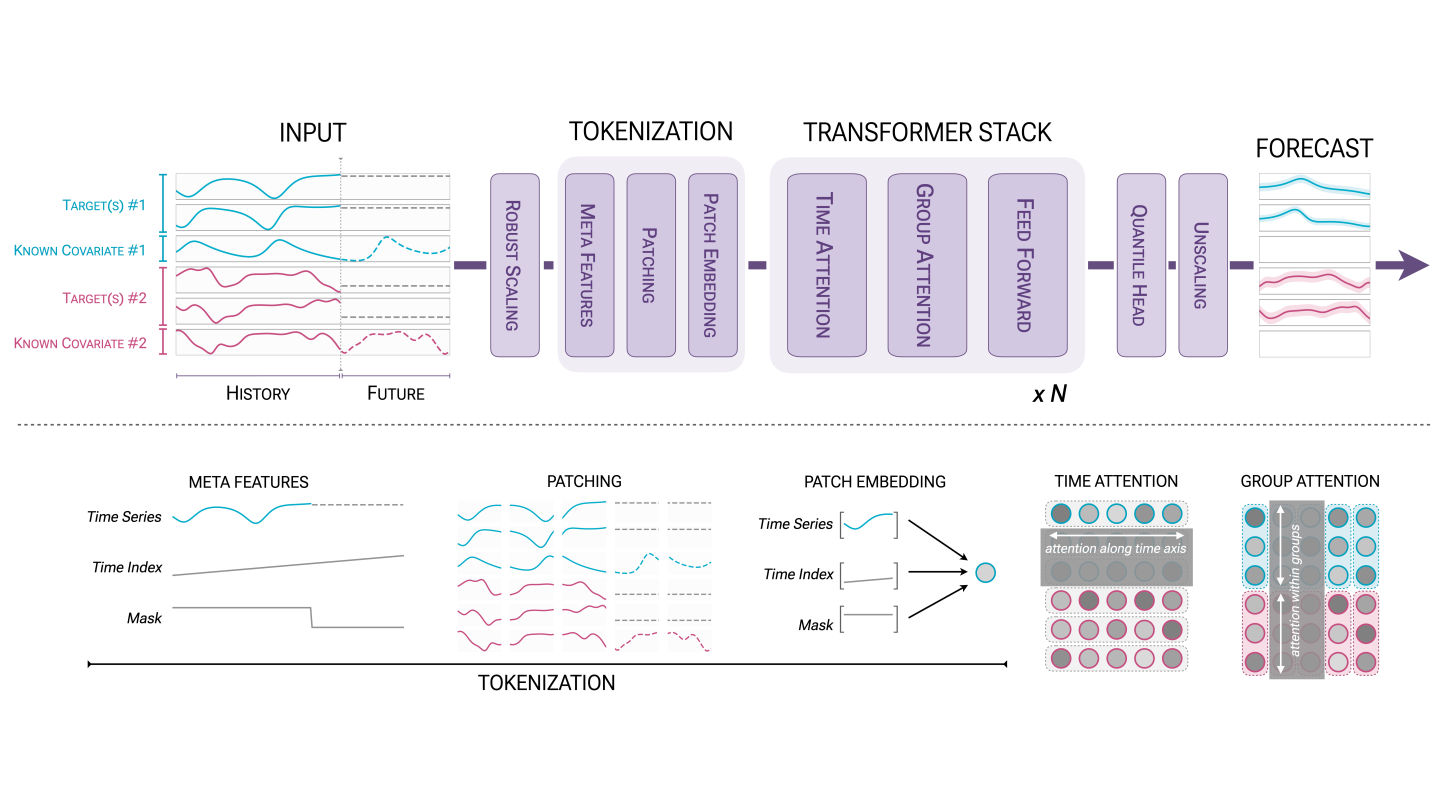

September 26, 2025: To transform scientific domains, foundation models will require physical-constraint satisfaction, uncertainty quantification, and specialized forecasting techniques that overcome data scarcity while maintaining scientific rigor.

Featured news

ICSE 2025, 2025: Pricing agreements at AWS define how customers are billed for usage of services and resources. A pricing agreement consists of a complex sequence of terms that can include free tiers, savings plans, credits, volume discounts, and other similar features. To ensure that pricing agreements reflect the customers’ intentions, we employ a protocol that runs a set of validations that check all pricing agreements

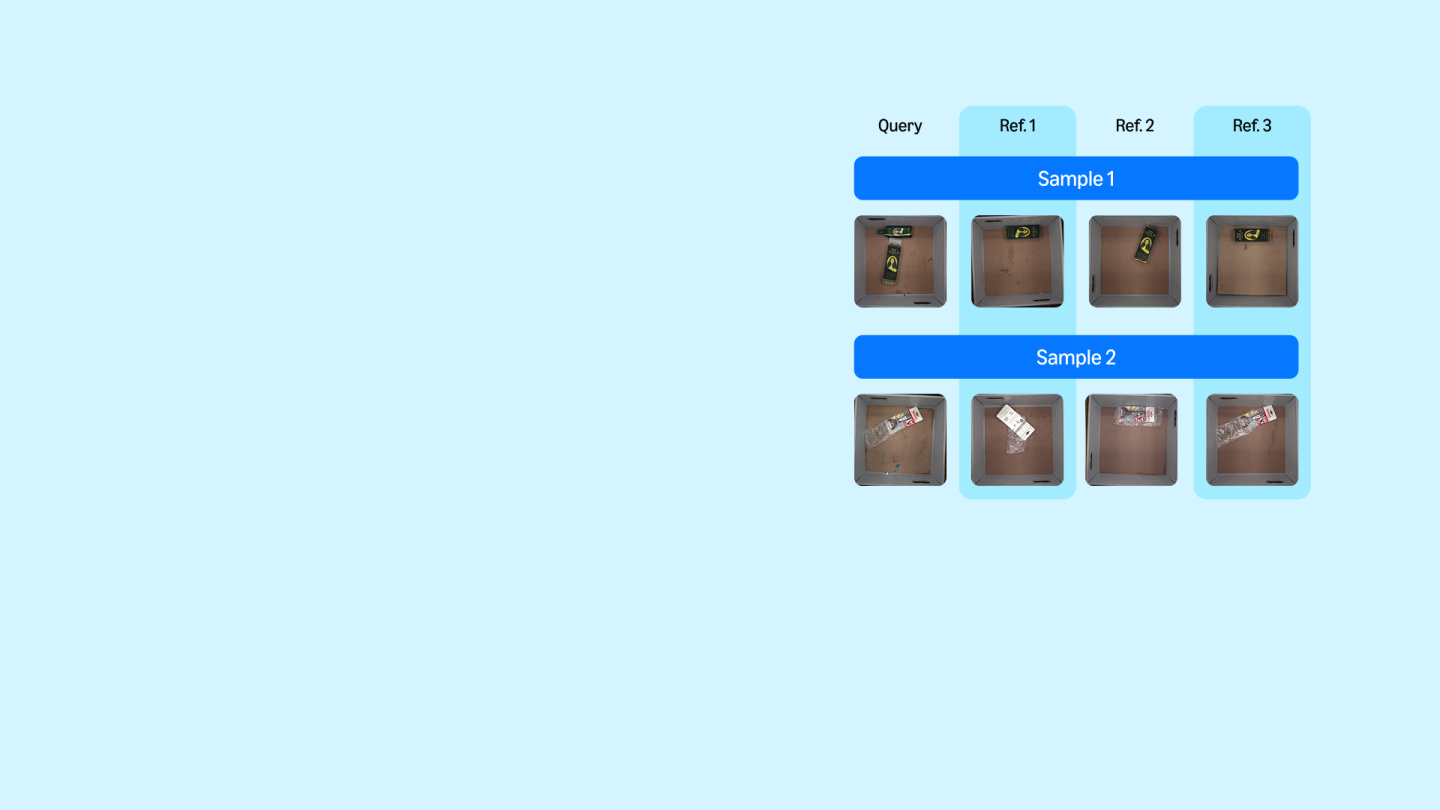

2025: Following the great progress in text-conditioned image generation, there is a dire need for establishing clear comparison benchmarks. Unfortunately, assessing the performance of such models is highly subjective and notoriously difficult. Current automatic assessments of generated image quality and alignment to text are approximate at best, while human assessment is subjective, poorly calibrated and not

2025: Despite recent advancements in speech processing, zero-resource speech translation (ST) and automatic speech recognition (ASR) remain challenging problems. In this work, we propose to leverage a multilingual Large Language Model (LLM) to perform ST and ASR in languages for which the model has never seen paired audio-text data. We achieve this by using a pre-trained multilingual speech encoder, a multilingual

2025: Retrieval-augmented generation (RAG) can enhance the generation quality of large language models (LLMs) by incorporating external token databases. However, retrievals from large databases can constitute a substantial portion of the overall generation time, particularly when retrievals are periodically performed to align the retrieved content with the latest states of generation. In this paper, we introduce

DCC 2025, 2025: Video compression enables the transmission of video content at low rates and high qualities to our customers. In this paper, we consider the problem of embedding a neural network directly into a video decoder. This requires a design capable of operating at latencies low enough to decode tens to hundreds of high-resolution images per second. And, additionally, a network with a complexity suitable for implementation

Collaborations

Whether you're a faculty member or student, there are a number of ways you can engage with Amazon.
