In November of 2019, University of Pennsylvania computer science professors Michael Kearns and Aaron Roth released The Ethical Algorithm: The Science of Socially Aware Algorithm Design. Kearns is the founding director of the Warren Center for Network and Data Sciences, and the faculty founder and former director of Penn Engineering’s Networked and Social Systems Engineering program. Roth is the co-director of Penn’s program in Networked and Social Systems Engineering and co-authored The Algorithmic Foundations of Differential Privacy with Cynthia Dwork. Kearns and Roth are leading researchers in machine learning, focusing on both the design and real-world application of algorithms.

Their book’s central thesis, which involves “the science of designing algorithms that embed social norms such as fairness and privacy into their code,” was already pertinent when the book was released. Fast forward one year, and the book’s themes have taken on even greater significance.

Amazon Science sat down with Kearns and Roth, both of whom recently became Amazon Scholars, to find out whether the events of the past year have influenced their outlook. We talked about what it means to define and pursue fairness, how differential privacy is being applied in the real world and what it can achieve, the challenges faced by regulators, what advice the two University of Pennsylvania professors would give to students studying artificial intelligence and machine learning, and much more.

Q. How has the narrative around designing socially aware algorithms evolved in the past year, and have the events of the past year altered your outlooks in any way?

Aaron Roth: The main thesis of our book hasn't really changed. In any particular problem, you have to start by thinking carefully about what you want in terms of fairness, privacy, or some other social desideratum, and then about how you value it relative to other things you might care about, such as accuracy.

Now, with the coronavirus pandemic, we have seen application areas where the right way to manage the trade-off between accuracy and privacy, for example, is more extreme than usual. In the midst of an outbreak, for instance, contact tracing might be really important, even though you can't really do contact tracing while protecting individual privacy. Because of the urgency of the situation, you might decide to trade off privacy for accuracy. But because the message of our book really is about thinking things through on a case-by-case basis, the thesis itself hasn't changed.

Michael Kearns: The events of the last year, in particular the coronavirus, the resulting restrictions on society and the tensions around them, and all of the recent social upheaval in the United States, have clearly made the topics of our book much more relevant. Those events have focused a lot of attention on the use of algorithms for both good and bad purposes, including contact tracing and releasing statistics about people's movements or health data, as well as the use of machine learning, AI, and algorithms more generally for applications like surveillance.

Since our book is, at a high level, about the tensions that arise when social norms like equality or privacy come into conflict with the use of algorithms to optimize things like performance or error, I don't think anything in the last year has changed our thinking about the technical aspects of these problems. But it's clear that the events of the last year have forced society to confront these problems more directly than it ever has before. In that sense, our timing was very fortunate, because the things we're talking about are more relevant now than ever.

Q. How does that affect your ability to define fairness? Is that something that can ever be a fixed definition, or does it need to be adjusted as events or specific use cases dictate?

Kearns: There's not one correct definition of fairness. In every application you have to think about who the parties are that you're trying to protect, and what the harms are that you're trying to protect them from. That changes both over time and in different scenarios.

Roth: Even before the events of the last year, fairness was always a very context- and beholder-dependent notion. One society might be primarily concerned about fairness by race, another might be primarily concerned about fairness by gender, and a different community might have other norms. The events of the last year have highlighted cases in which norms vary not only across places and communities, but also over time.

People's attitudes about relatively invasive technologies like contact tracing might be quite different now than they were a year ago. If a year ago I had told you, “Suppose there was some disease that people were catching, and the most effective way of tamping it down was contact tracing,” many people might have said, “That sounds really invasive to me.” But now that we've all been through one of the alternatives, being on lockdown for six months, people's minds might have changed. We’ve definitely seen norms around privacy for health-related data change.

Q. Standard-setting bodies face a significant challenge when it comes to auditing algorithms. Given the scope of that challenge, what needs to happen to allow those groups to do that effectively?

Roth: Although it hasn't happened yet, regulatory agencies are thinking about this and are reaching out to people like us to help them think about doing it in the right way. I don't know of any regulatory agency that is ready yet to audit algorithms at scale in the sensible, technical ways we discuss in the book. But some regulatory agencies have gotten the idea that they should be gearing up to do this, and those agencies have taken preliminary steps in that direction.

Kearns: Many of the conversations we've had with standard-setting bodies make it clear that they're realizing that, collectively, they've fallen technologically behind the industries they regulate. They don't have the right resources or personnel to do some of the more technical types of auditing. But in these conversations, it's also become clear to us that even if you could snap your fingers and get the right people and the right resources, that would only be part of a broader framework.

Other important pieces involve becoming more precise about best practices and thinking carefully about what regulatory specifications should look like. Let me give a concrete example. One of the things we argue in our book is that many laws and regulations, in areas like consumer finance, try to get at fairness by restricting what kinds of inputs an algorithm can use. These laws and regulations say, “In order to make sure that your model isn't racially discriminatory, you must not use race as a variable.” But not using race as a variable is no guarantee that you won't build a model that discriminates by race; it can even exacerbate the problem. What we advocate in the book is that, rather than restricting the inputs, you should specify the behavior you want in the outputs. So instead of saying, “Don't use race,” say, “The outputs of the model shouldn't be discriminatory by race.”
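The gap between restricting inputs and specifying outputs can be made concrete with a toy example. The sketch below is hypothetical: the population, the `zip_code` feature, and the approval-rate tolerance are invented for illustration, and equal approval rates across groups is only one of many possible output specifications. The model never reads the protected attribute, yet because zip code is strongly correlated with group (a proxy), its outputs still differ sharply by group. An audit of inputs would pass it; an audit of outputs does not.

```python
from collections import defaultdict


def make_applicants():
    """Build a hypothetical toy population in which zip code acts as a proxy for group."""
    applicants = []
    # Each tuple: (group, applicants living in zip "X", applicants living in zip "Y").
    for group, n_x, n_y in [("A", 45, 5), ("B", 5, 45)]:
        applicants += [{"group": group, "zip_code": "X"} for _ in range(n_x)]
        applicants += [{"group": group, "zip_code": "Y"} for _ in range(n_y)]
    return applicants


def input_restricted_model(applicant):
    """Approve based on zip code alone; the protected 'group' field is never read."""
    return applicant["zip_code"] == "X"


def approval_rates(applicants, model):
    """Output-based audit: the fraction of each group that the model approves."""
    approved, total = defaultdict(int), defaultdict(int)
    for applicant in applicants:
        total[applicant["group"]] += 1
        approved[applicant["group"]] += int(model(applicant))
    return {group: approved[group] / total[group] for group in total}


def meets_output_spec(rates, tolerance):
    """Hypothetical output specification: group approval rates differ by at most `tolerance`."""
    return max(rates.values()) - min(rates.values()) <= tolerance


rates = approval_rates(make_applicants(), input_restricted_model)
print(rates)                                    # {'A': 0.9, 'B': 0.1}
print(meets_output_spec(rates, tolerance=0.1))  # False
```

The check in `meets_output_spec` only looks at what the model does, so it applies unchanged whether the disparity comes from race itself, a zip-code proxy, or some combination of innocuous-looking features.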

Q. Differential privacy has progressed from theoretical to applied science in significant ways in the past few years. How is differential privacy being utilized? How does that help balance the trade-off between privacy and accuracy?

Roth: In the last five years or so, differential privacy has gone from an academic topic to a real technology. For example, the 2020 US Decennial Census will, for the first time, release all of its statistical products subject to the protections of differential privacy. This is because the Census is required by law to protect the privacy of the people it surveys, and the ad hoc techniques used in previous decades to protect the statistics have been shown not to work.

I think what we will see is that the statistics the Census releases this year will be more protective of Americans' privacy. However, in keeping with the theme of trade-offs, rigorous privacy protection is not without cost. Certain kinds of analyses, such as detailed demographic studies that rely on highly granular Census data, might no longer be feasible under differential privacy. We've seen this play out in the public sphere between downstream users of the data and the folks at the Census Bureau who actually have to hammer out the details.
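One way to see why granular data bears most of the cost: under the Laplace mechanism, a standard differential privacy technique (and not necessarily the mechanism the Census Bureau uses), the noise added to a count does not depend on how large the count is. The sketch below assumes a hypothetical privacy parameter `epsilon = 0.5` and two illustrative cell sizes; the same expected absolute error is negligible for a large population and substantial for a small area.

```python
def expected_relative_error(true_count, epsilon):
    """Expected |noise| / count for a count released with Laplace noise.

    A counting query has sensitivity 1, so the Laplace mechanism uses scale
    1/epsilon, and the expected absolute value of Laplace(0, b) noise is b.
    """
    return (1.0 / epsilon) / true_count


epsilon = 0.5  # hypothetical privacy parameter; smaller means more privacy, more noise
for label, count in [("small area", 20), ("large state", 1_000_000)]:
    print(label, expected_relative_error(count, epsilon))
# small area 0.1
# large state 2e-06
```

Halving `epsilon` doubles the error for every cell, which is the privacy-accuracy trade-off in its simplest form; the smallest cells are the first to become too noisy for detailed analysis.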

Folks still try and position these two technologies as inhabiting different tradeoffs on the same privacy scale --- but that is very misleading. SMC is fundamentally about data security. DP is about limiting inference. Success at one of these goals says little about the other.

— Aaron Roth (@Aaroth) October 14, 2020

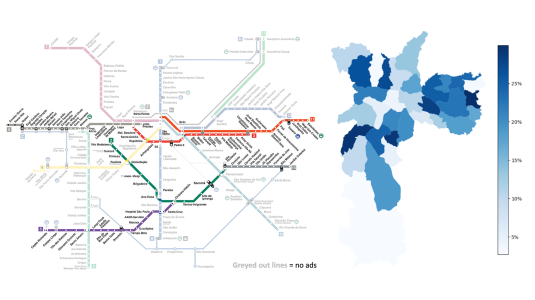

We've seen other interesting uses of differential privacy during the pandemic, too. Some tech companies have used differential privacy when releasing statistics about personal mobility data gathered during the pandemic. Releasing population-level statistics like these is what differential privacy does best: it's designed precisely to prevent you from learning too much about any particular individual. If you want to know how much less people are moving around in different cities because of coronavirus restrictions, these data sets let you answer that question without giving up too much privacy for the individuals whose mobile devices provided the data at the most granular level.

Q. So how does differential privacy help protect individual information?

Roth: Oftentimes, the things you most naturally want to know about a data set are not facts about particular people, but population-level aggregates, like how many people are crowded into my supermarket at 6 a.m. when it opens. But if you tell me sufficiently many aggregate statistics, I can do some math and back out particular people's data from them. The fact that aggregate statistics can disclose individual people's data is an unfortunate accident that has little to do with what you really wanted to learn.
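The simplest way to back out one person's data from exact aggregates is a differencing attack: release two counts that differ only in whether one particular person could be included. The records, names, and age cutoff below are hypothetical. Each statistic on its own is an innocent-looking population aggregate, yet together they reveal an individual's presence.

```python
# Hypothetical records, not real data: one row per person in a neighborhood.
people = [
    {"name": "alice", "age": 84, "at_store_6am": True},
    {"name": "bob", "age": 35, "at_store_6am": False},
    {"name": "carol", "age": 52, "at_store_6am": True},
    {"name": "dave", "age": 29, "at_store_6am": True},
]


def count_at_store(rows, predicate=lambda row: True):
    """Exact aggregate statistic: how many people matching `predicate` were at the store."""
    return sum(1 for row in rows if predicate(row) and row["at_store_6am"])


# Two aggregates that each look harmless on their own...
everyone = count_at_store(people)
under_80 = count_at_store(people, lambda row: row["age"] < 80)

# ...but their difference counts shoppers aged 80+, and Alice is the only such person.
alice_was_there = (everyone - under_80) == 1
print(everyone, under_80, alice_was_there)  # 3 2 True
```

With many more queries, the same arithmetic scales up into reconstructing entire records, which is why exact aggregates offer weaker protection than they appear to.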

At its most basic level, differential privacy does things like add little bits of noise to the statistics you're releasing, so that what you're telling me is not the exact number of people who were in my local supermarket at 6 a.m., but roughly that number, plus or minus some small number of people. The fortunate mathematical fact is that you can add relatively small amounts of noise that still allow you to get good estimates but are sufficient to wash out the contributions of particular people, making it impossible to learn too much about any particular individual. That lets you answer the population-level questions you were curious about without incidentally or accidentally learning about particular people, which is the dangerous side.
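A minimal sketch of "adding a little noise" is the Laplace mechanism for a counting query, a standard textbook construction and not necessarily what any particular deployment uses. It assumes a hypothetical true count of 200 and privacy parameter `epsilon = 0.1`. Because one person can change a count by at most 1, noise with scale 1/epsilon is enough to mask any individual's contribution, while staying small relative to a count in the hundreds. A production system would also need care with floating-point arithmetic and a secure source of randomness; this sketch ignores both.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two i.i.d. exponential draws."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(true_count, epsilon, rng):
    """Laplace mechanism for a counting query.

    Adding or removing one person changes a count by at most 1 (sensitivity 1),
    so adding Laplace noise with scale 1/epsilon satisfies epsilon-differential privacy.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)  # seeded only so the example is reproducible
# Release a hypothetical true count of 200 five times; each release gets fresh noise.
releases = [private_count(200, epsilon=0.1, rng=rng) for _ in range(5)]
print([round(r) for r in releases])
```

Rounding the released values, as in the `print` call, is post-processing and does not weaken the guarantee; each additional release, however, spends more of the privacy budget.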

Kearns: To make this slightly more concrete, say that each day I want to tell everybody how many people were in the supermarket a couple of blocks from me at 1 p.m. If you happened to be at that supermarket at one o’clock, then your GPS data is one of the data points that goes into the count. You may consider your presence at the supermarket at 1 p.m. to be the kind of private information that you don't want the whole world to know. So let's say that, on a typical day, there might be a couple hundred people at the supermarket, and that I add a random number that is an order of magnitude smaller, somewhere between plus and minus 25. The addition of that random number mathematically and provably obscures any individual’s contribution to the count: I won't be able to look at the count and figure out whether any particular person was present. And because the number I add is between minus 25 and 25, it can't throw the overall count off by anything close to 100. I'll still have an accurate count up to some resolution, but I will have provided privacy to everybody who was present at the supermarket and, actually, to all the people who weren't present as well.
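The accuracy side of this example can be checked with a line of arithmetic. The bound below takes $c = 200$ as a stand-in for "a couple hundred" and $Z$ as the added random number, assumed to lie between minus 25 and 25 as in the example. It illustrates how little the noise costs in accuracy; it is not a proof of a formal privacy guarantee.

```latex
\begin{aligned}
\tilde{c} &= c + Z, \qquad -25 \le Z \le 25 \\
|\tilde{c} - c| &= |Z| \le 25 \\
\frac{|\tilde{c} - c|}{c} &\le \frac{25}{200} = 12.5\% \qquad \text{for } c = 200
\end{aligned}
```

By contrast, any one shopper moves the true count $c$ by exactly 1, which is tiny compared with the 50-unit range of possible values of $Z$.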

Q. How are topics like fairness, accountability, transparency, interpretability, and privacy showing up in computer science curricula at Penn and elsewhere in higher education?

Kearns: When Aaron and I first started working on the technical aspects of fairness in machine learning and related topics, the area was pretty sparsely populated. This was maybe six or seven years ago, and there weren't many papers on these topics. There were some older ones, coming more from the statistics literature, but there wasn't really a community of any size within machine learning that thought about these problems. On the research side, the opposite is now true. All of the major machine learning conferences have significant numbers of papers on these topics, as well as workshops devoted to them. There are now standalone conferences on fairness, accountability, and explainability in machine learning that are growing every year. It's a very vibrant, active research community. And even though it's still early, the topic is important enough that there are now efforts to teach it even at the undergraduate level.

For the last two years at Penn, for example, I have piloted a course called The Science of Data Ethics. It’s deliberately called that, and not The Ethics of Data Science, because it’s about the science of making algorithms more ethical according to different norms, like fairness and privacy. It's not your typical engineering ethics course, which at some level is meant to teach you to be a good, responsible person by looking at case studies where things went wrong and discussing what you would do differently. This class is a science class. It says: Here are the standard principles of machine learning, here's how those standard principles can lead to discriminatory behavior in predictive models, and here are alternative principles, or modifications of those principles and the algorithms that implement them, that avoid or mitigate that behavior.

Q. Is there a more multidisciplinary approach to this set of challenges?

Roth: It's definitely a multidisciplinary area. At Penn, we've been actively collaborating with interested folks in the law school and the criminology department. So far, we don't really have interdisciplinary undergraduate courses on these topics. Those courses would be good in the long run, but at the research and graduate level we've been having interdisciplinary conversations for a number of years.

Kearns: Not just at the teaching level, but even in the research community, there's a real melting pot of viewpoints on these topics. Even though our book is focused on the scientific aspects of these issues, we do spend some time mentioning the fact that the science will only take us so far. In particular, one critique that we try to anticipate in the book, and that we’re very aware of, is that technical work on making algorithms more ethical is only one piece of a much larger sociological, or what some people would call socio-technical, pipeline. Machine learning begins with data and ends with a model. But upstream from the data is the entire manner in which the data was collected and the conditions under which it was collected.

One of the things that's very interesting, exciting, and necessary about the dialogue around these issues is that, even when there's quite a bit to say about them scientifically, you don't want to just put your head down and look at the science. You want to talk to the people who are upstream and downstream from the machine learning part of this pipeline, because they bring very different perspectives and can often point out things that change the way you look at the problems scientifically, in a positive way.

Q. If I were a student exploring AI or ML and I wanted to influence this particular conversation, beyond technical skills, what kind of skills should I be developing?

Kearns: What I would very strongly advocate is: think widely, think broadly, think big. Yes, you're going to be doing technical work in particular models and frameworks, and you want to get results in those frameworks. But also read what people from very, very different fields think about these problems. Go to their conferences; don't just go to the machine learning conferences and the sub-track on fairness in machine learning. Go to the interdisciplinary conferences and workshops that are deliberately meant to bring together scientists, legal scholars, philosophers, sociologists, and regulators. Hear their views on these problems, and keep an ear out for whether they even think you're working on a problem that's relevant, or one that even has a solution.

That's the way I have approached my career: focus on what I'm good at and what I think is interesting from a scientific standpoint, but not in a scientific vacuum. I deliberately expose myself whenever possible to what people from a completely different perspective are thinking about the same set of topics. The good news is that there's a lot of opportunity for that right now. If you work in some branch of materials science, it may not be possible to wander out into the world and get diverse perspectives, but everybody has an opinion on AI and machine learning ethics these days, so there is no shortage of sources from which this hypothetical student could go out and find their own technical views challenged or broadened.

Roth: One trap that is very easy for a new PhD student, or even an established researcher, to fall into is to write the introductions to your papers motivated by some kind of fairness problem, but then find yourself solving some narrow technical problem that ultimately has little connection to the world. I am sometimes guilty of this myself, but this is an area where there really are lots of important problems to solve. It's an area where theoretical approaches, if wielded correctly, can be extremely valuable. The thing that’s valuable is to be, sort of, multilingual. It can be difficult to talk to people from other fields because those fields have different vocabularies and a different world view. However, it's important to understand the perspective of these different communities. There are interdisciplinary groups looking at fairness, accountability, and transparency, which bring people together from all sorts of backgrounds to actively work on developing, at the very least, a shared vocabulary—and hopefully a shared world view.

Q. You've become Amazon Scholars fairly recently. What inspired you to take on this role?

Roth: I've spent most of my career as a theorist, so the ways I've been primarily thinking about privacy and fairness are in the abstract. I've had fun thinking about questions like: What kinds of things are, and are not, possible in principle with differential privacy? Or what kinds of semantic fairness promises can you make to people in a way that is still consistent with trying to learn something from the data? The attraction of Amazon and AWS is that it's where the rubber meets the road. Here we are deploying real machine learning products, and the privacy and the fairness concerns are real and pressing.

My hope is that by having a foot in the practice of these problems, not just their theory, not only will I have some effect on how consequential products actually work, but I’ll learn things that will be helpful in developing new theory that is grounded in the real world.

Kearns: Until I joined Amazon, I had a kind of second life in the quantitative finance industry. While I did practical things in the world of finance, it was mostly a matter of applying my general knowledge of machine learning. The chance to come to Amazon and really think about the topics we've been discussing in a practical technological setting seemed like a great opportunity. I'm also a long-term fan and observer of the company. I’ve known people here for many years and have had great conversations with them, so I’ve watched with great interest over the last decade plus as Amazon built its machine learning effort from scratch and gradually expanded it to wider and wider applications. It’s now at a point where not only is machine learning used widely within the company to optimize all kinds of processes and recommendations and the like, but it’s also used by customers worldwide in the form of services like Amazon SageMaker.

I have watched this with great interest because when I was studying machine learning in graduate school back in the late 1980s, trust me, it was an obscure corner of AI that people kind of raised their eyebrows at. I never would have thought we would reach the point where The Wall Street Journal expects everyone to know what it means when it writes about machine learning, let alone that machine learning would actually be a product sold at scale.

I've watched these developments from academia and from the world of finance. It seemed like a great opportunity to combine my very specific current research and other interests with an inside look at one of the great technology companies. Like Aaron, I came in with high expectations, and they have only been exceeded in the time I've spent here.