(Editor’s note: This is the third in a series of articles Amazon Science is publishing related to the science behind products and services from companies in which Amazon has invested. In 2018, the Alexa Fund provided Voiceitt with an accelerator investment.)

Approximately 7.5 million people in the U.S. have trouble using their voices, according to the National Institute on Deafness and Other Communication Disorders. As computer technology moves from text-based to voice-based interfaces, people with nonstandard speech are in danger of being left behind.

Voiceitt, a startup in Ramat-Gan, Israel, says it is committed to ensuring that doesn’t happen. With Voiceitt, customers train their own personalized speech recognition models, adapted to their speech patterns, which let them communicate with voice-controlled devices or with other people.

Last week, Voiceitt announced the official public release of its app.

The Alexa Fund — an Amazon venture capital investment program — was an early investor in Voiceitt, and integration with Alexa is built into the Voiceitt app.

“We do see users who get Voiceitt specifically to use Alexa,” says Roy Weiss, Voiceitt’s vice president of products. “They see direct and quite immediate results. From the first command, they unlock capabilities they couldn’t access before.”

“Now that I don’t have to call my mom and dad in, or my aide or my assistant in, and tell them ‘Hey, I need this; I need that’, I can do it independently,” says one Voiceitt user with cerebral palsy. “I use it all the time. … I use it to do everything.”

“After now being speech motor disabled for over three years, including three years of speech dysfunction and two years of no intelligible speech, Voiceitt is a key part of my getting my voice back,” writes another Voiceitt user.

The app

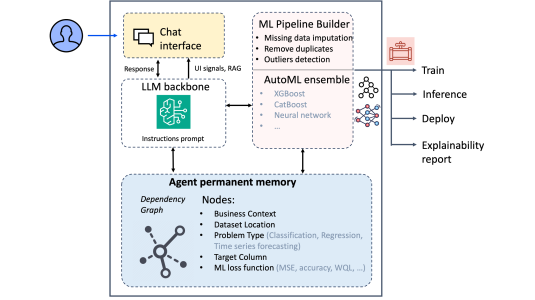

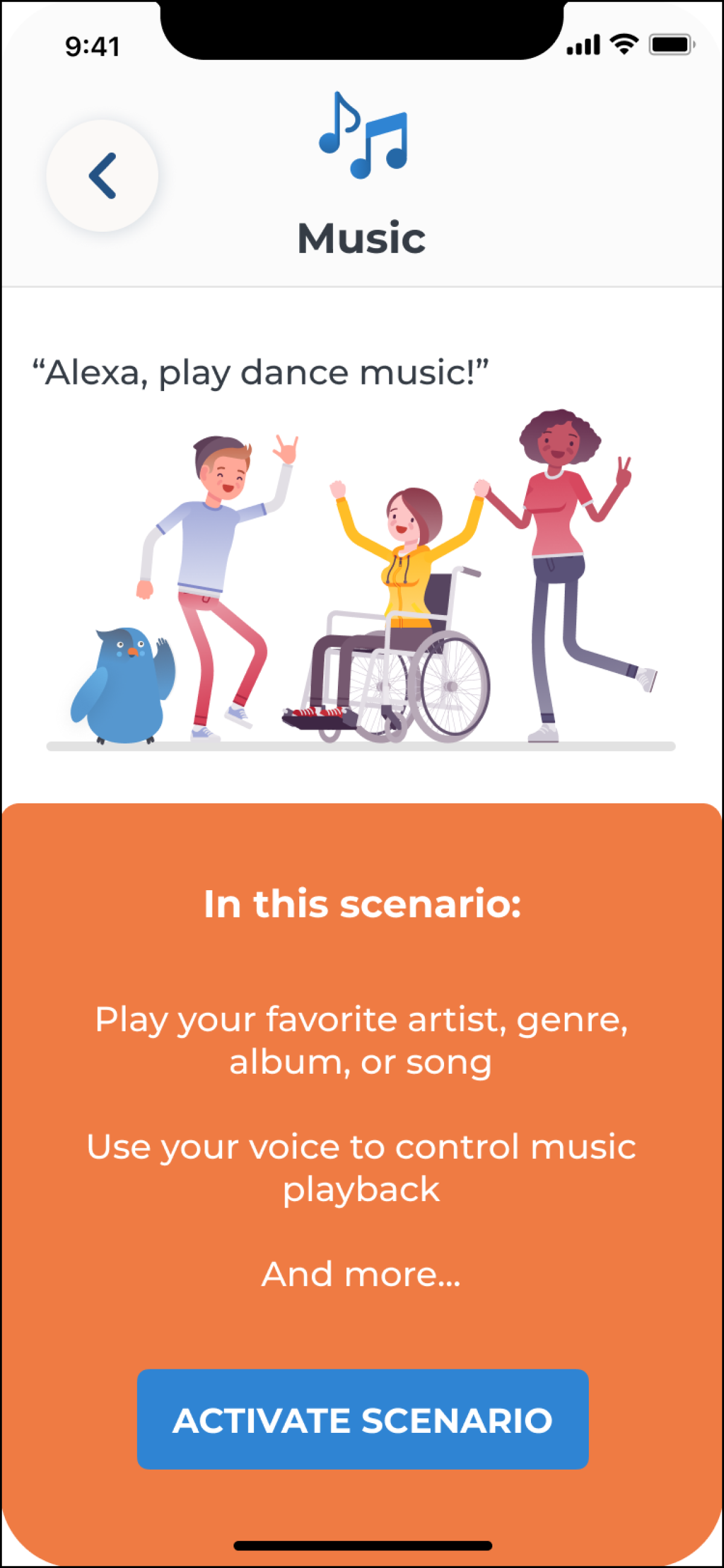

Voiceitt’s interface is an iOS mobile app with two modes: conversation mode lets customers communicate with other people, using synthetic speech and the phone’s speaker; smart-home mode lets customers interact with Alexa.

Each mode has a set of speech categories. For conversation mode, the categories are scenarios such as transportation, shopping, and medical visits; for smart home, they’re Alexa functions such as lights, music, and TV control.

Each category includes a set of common, predefined phrases. In smart-home mode, those phrases are Alexa commands, such as “Lights on” to turn on lights. A command can be configured to trigger a particular action; for instance, “Lights on” might be configured to activate a specific light in a specific room. Customers repeat each phrase multiple times to train a personal speech recognition model.

Modeling nonstandard speech

Recognizing nonstandard speech differs from ordinary speech recognition in some fundamental ways, says Filip Jurcicek, speech recognition team lead at Voiceitt.

When training data is sparse, as it is for Voiceitt, whose customers generate it on the fly, the common approach to automatic speech recognition (ASR) is a pipelined method. In that method, an acoustic model converts acoustic data into phonemes, the smallest units of speech that distinguish one word from another; a “dictionary” provides candidate word-level interpretations of the phonemes; and a language model adjudicates among the possible word-level interpretations by considering the probability of each.
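The three stages of the pipelined method can be sketched in a few lines of Python. This is a toy illustration only: the pronunciation table, the word probabilities, and the function names (`acoustic_model`, `decode`) are all invented for the example, and the "acoustic model" simply passes through phoneme labels that a real model would have to infer from audio.

```python
# Toy pipelined recognizer. Every table and name here is hypothetical;
# a real system learns these components from data.

# Hypothetical "dictionary": phoneme sequence -> candidate words.
PRONUNCIATIONS = {
    ("l", "ay", "t", "s"): ["lights", "lites"],
    ("aa", "n"): ["on"],
}

# Hypothetical unigram language model: word -> probability.
WORD_PROB = {"lights": 0.9, "lites": 0.1, "on": 1.0}


def acoustic_model(segments):
    """Stand-in for the acoustic model.

    Each segment is already given as a list of phoneme labels, so this
    function only normalizes the type. A real model maps audio to phonemes.
    """
    return [tuple(segment) for segment in segments]


def decode(segments):
    """Acoustic model -> dictionary lookup -> language-model choice."""
    words = []
    for phonemes in acoustic_model(segments):
        candidates = PRONUNCIATIONS.get(phonemes, [])
        if not candidates:
            # No dictionary entry matches this phoneme sequence.
            words.append("<unk>")
            continue
        # The language model picks the most probable candidate word.
        words.append(max(candidates, key=lambda w: WORD_PROB.get(w, 0.0)))
    return " ".join(words)
```

Calling `decode([["l", "ay", "t", "s"], ["aa", "n"]])` yields `"lights on"`, while a phoneme sequence that is missing from the dictionary falls through to `"<unk>"`.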

But with nonstandard speech, explains Matt Gibson, Voiceitt’s lead algorithm researcher, “we need to look farther than those phoneme-level features. We often see divergence from a normative pronunciation. For instance, if a word starts with a plosive like ‘b’ or ‘p’, the speaker might consistently precede it with an ‘n’ or ‘m’ sound — ‘mp’ or ‘mb’”.

This can cause problems for conventional mappings from sounds to phonemes and phonemes to words. Consequently, Jurcicek says, “We have to look at the phrase as a whole.”


In recent years, most commercial ASR services have moved from the pipelined approach to end-to-end models, in which a single neural network takes an acoustic signal as input and outputs text. This approach can improve accuracy, but it requires a large body of training data.

Typically, end-to-end ASR models use recurrent neural networks, which process sequential inputs in order. An acoustic signal would be divided into a sequence of “frames”, each of which lasts just a few milliseconds, before passing to the recurrent neural net.
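As a rough sketch of the framing step, the function below cuts a list of audio samples into fixed-length, possibly overlapping frames. The function name and the default frame length and hop are assumptions chosen for illustration, not values reported by Voiceitt.

```python
def split_into_frames(samples, sample_rate, frame_ms=25, hop_ms=10):
    """Split a sequence of samples into fixed-length frames.

    frame_ms and hop_ms are illustrative defaults (assumed, not from the
    article). Trailing samples that do not fill a whole frame are dropped.
    """
    frame_len = int(sample_rate * frame_ms / 1000)
    hop_len = int(sample_rate * hop_ms / 1000)
    if frame_len <= 0 or hop_len <= 0:
        raise ValueError("frame and hop must span at least one sample")

    frames = []
    # Step through the signal one hop at a time, keeping only full frames.
    for start in range(0, len(samples) - frame_len + 1, hop_len):
        frames.append(samples[start:start + frame_len])
    return frames
```

With these defaults, one second of audio sampled at 16 kHz produces 98 frames of 400 samples each, and a recurrent network would consume them one after another.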

In order to “look at the phrase as a whole”, Jurcicek says, Voiceitt instead uses a convolutional neural network, which takes a much larger chunk of the acoustic signal as input. Originally designed to look for specific patterns of pixels wherever in an image they occur, convolutional neural networks can, similarly, look for telltale acoustic patterns wherever in a signal they occur.
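The position-independence of a convolutional filter can be shown with a one-dimensional toy example. This is a sketch of the underlying operation, not of Voiceitt's network: the filter values are written by hand here, whereas a real network learns many such filters, and the names `slide_filter`, `pattern`, `early`, and `late` are invented for the illustration.

```python
def slide_filter(signal, kernel):
    """Slide a filter across a 1-D signal (valid-mode cross-correlation).

    Returns one response per position at which the kernel fits entirely
    inside the signal.
    """
    positions = max(len(signal) - len(kernel) + 1, 0)
    return [
        sum(signal[i + j] * kernel[j] for j in range(len(kernel)))
        for i in range(positions)
    ]


# A hand-written filter standing in for a learned acoustic pattern.
pattern = [1, -1, 1]

# The same pattern embedded early in one signal and late in another.
early = [0, 0, 1, -1, 1, 0, 0, 0]
late = [0, 0, 0, 0, 0, 1, -1, 1]

early_responses = slide_filter(early, pattern)
late_responses = slide_filter(late, pattern)

# The peak response is equally strong in both signals; only its
# position differs.
early_peak = max(early_responses)
late_peak = max(late_responses)
```

Taking the maximum over all positions gives the same score for both signals, which is why a filter of this kind can pick up a speaker's consistent sound pattern regardless of where in the utterance it falls.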

“As long as customers are consistent in their pronunciation, this gives us the opportunity to exploit that consistency,” Jurcicek says. “This is where I believe Voiceitt really adds value for the user. Pronunciation doesn’t have to follow a standard dictionary.”

As customers train their custom models, Voiceitt uses their recorded speech both for training and testing. Once the output confidence of the model crosses some threshold, the phrase is “unlocked”, and the customer may begin using it to control a voice agent or communicate with others.
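One way such an unlocking rule could work is sketched below. The article does not say how Voiceitt computes or thresholds confidence, so everything here is an assumption made for illustration: the class name `PhraseTrainer`, the threshold of 0.8, the minimum of three recordings, and the choice to average the most recent scores.

```python
from dataclasses import dataclass, field


@dataclass
class PhraseTrainer:
    """Tracks model confidence on one phrase (hypothetical sketch)."""

    phrase: str
    threshold: float = 0.8   # assumed value, not from the article
    min_recordings: int = 3  # assumed value, not from the article
    confidences: list = field(default_factory=list)
    unlocked: bool = False

    def add_recording(self, confidence):
        """Record the model's confidence on a new test recording.

        The phrase unlocks once the mean of the most recent
        `min_recordings` confidences reaches `threshold`, and it stays
        unlocked afterward.
        """
        if not 0.0 <= confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")
        self.confidences.append(confidence)

        recent = self.confidences[-self.min_recordings:]
        if (len(recent) == self.min_recordings
                and sum(recent) / len(recent) >= self.threshold):
            self.unlocked = True
        return self.unlocked
```

For example, feeding confidences of 0.5, 0.7, 0.9, and 0.95 to a trainer for “Lights on” leaves the phrase locked after the third recording and unlocks it on the fourth, when the mean of the last three scores rises above 0.8.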

But the training doesn’t stop there. Every time the customer uses a phrase, it provides more training data for the model, which Voiceitt says it continuously updates to improve performance.

The road ahead

At present, Voiceitt’s finite menu of actions means that it’s possible to learn and store separate models for each customer. But Voiceitt plans to scale the service up significantly, so Voiceitt researchers are investigating more efficient ways to train and store models.

“We’re looking at ways to aggregate existing models to come up with a more general background model, which would then act as a starting point from which we could adapt to new users,” Gibson says. “It may be possible to find commonalities among users and cluster them together into groups.”

In the meantime, however, Voiceitt is already making a difference in its customers’ lives. Many people with difficulty using their voices also have difficulty using their limbs and hands. For them, Voiceitt doesn’t just offer the ability to interact with voice agents; it offers the ability to exert sometimes unprecedented control over their environments. In the videos above, the reactions of customers using Voiceitt for the first time testify to how transformative that ability can be.

“It’s really inspiring to see,” Weiss says. “We all feel very privileged to create a product that really has a part in changing users’ lives.”